Dennis Miller, my favorite comedian, once said, “How would you like to be a photographer for the National Enquirer? Is the lowest possible rung on the photo-journalistic ladder?” – I digress.

But it makes me think of today’s “backup admin”. Sure, there is still a requirement to protect data, but that mantra is changing rapidly, and in two fundamental directions. First, there is the need for extremely fast data access. That access could be in the form of recovery, but it could also be in the form of data access for new business use cases such as Test/Dev or DevOps, Analytics, Reporting or even Patch Management. The second requirement is the ability to enable secure self-service for the people who need access to the data. Aside from configuration, these new requirements all but put yesterday’s “backup admin” out to pasture.

Today’s backup administrator needs a data protection solution that:

- Is simple

- Is fast (to deploy, back up and recover)

- Is reliable

- Can scale

- Enables self-service, but more importantly

- Has APIs that can integrate with other infrastructure automation and orchestration solutions

- Oh, and doesn’t cost a boatload

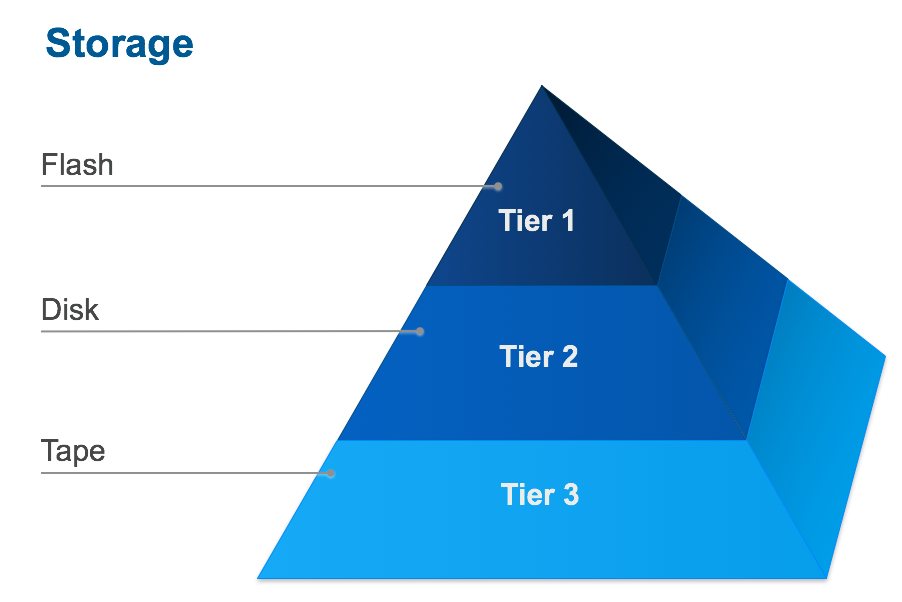

Additionally, I have always said that the hardest thing to change in IT is process, not technology. But we are at a tipping point when it comes to data protection and, even more so, the recovery of and access to data. When a new storage tier called “Flash” came onto the market, we adjusted our processes a bit to accommodate it and give our applications better performance, which in turn translated into competitive advantage.

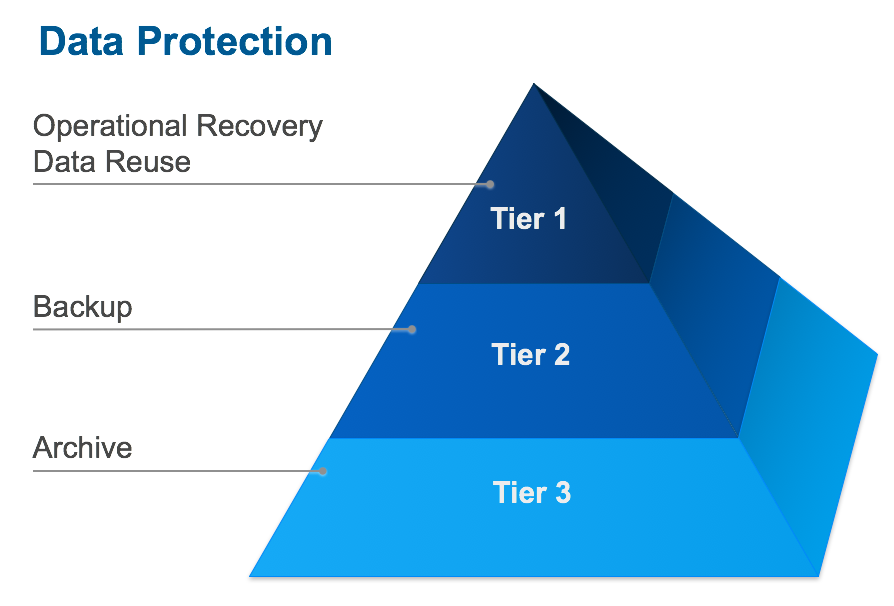

In much the same manner, there are new solutions available that enable a new class of data recovery called “Operational Recovery”. It addresses the day-to-day requests for access to data, either because data was lost or destroyed, or because someone needs the most recent copy of production data to develop against or report on.

The evolution on the compute side, with capabilities such as virtualization and containers, has made it easier for data protection solutions to move up the food chain. Taking advantage of the APIs provided in these platforms has enabled next-generation data protection solutions to provide faster and easier backups, making for better RPOs and RTOs. The containerization of the compute layer, again with the APIs, has also made it possible to automate not just the backups (which you would expect) but the recoveries as well. Combining this with automation and orchestration solutions makes the use cases for data reuse much stronger.
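To make the automated-recovery idea a little more concrete, here is a minimal Python sketch of the decision an orchestration job might make before refreshing a Test/Dev copy: find the most recent recovery point for a workload and check it against an RPO target. Everything here is hypothetical and for illustration only. `BackupCopy`, `latest_copy`, `meets_rpo` and `plan_refresh` are made-up names, the catalog is a plain in-memory list, and a real product would expose this through its own API.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List, Optional


@dataclass(frozen=True)
class BackupCopy:
    """One recovery point in a hypothetical, in-memory backup catalog."""
    workload: str
    taken_at: datetime


def latest_copy(catalog: List[BackupCopy], workload: str) -> Optional[BackupCopy]:
    """Return the most recent recovery point for a workload, or None if there is none."""
    copies = [c for c in catalog if c.workload == workload]
    return max(copies, key=lambda c: c.taken_at, default=None)


def meets_rpo(copy: Optional[BackupCopy], now: datetime, rpo: timedelta) -> bool:
    """True if a copy exists and is no older than the RPO target."""
    return copy is not None and now - copy.taken_at <= rpo


def plan_refresh(catalog: List[BackupCopy], workload: str,
                 now: datetime, rpo: timedelta) -> str:
    """Decide what an automated Test/Dev refresh should do next (illustrative only)."""
    copy = latest_copy(catalog, workload)
    if meets_rpo(copy, now, rpo):
        return f"restore {workload} from {copy.taken_at.isoformat()}"
    return f"back up {workload} first"
```

In this sketch the job only restores when the newest copy is fresh enough, and otherwise asks for a new backup first. The point is simply that once backups and recoveries are both reachable through an API, this kind of check can run without a person in the loop.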

This raises the question: why are “we” (the collective ‘IT’ we) trying to move strategic data management to the backup? It makes sense from a recovery perspective, but data reuse? The answer is simple. First, all the data in the enterprise typically lives in one collective place called the backup infrastructure. Second, can you name another tool in the environment that sees and has access to all the data? Couple these facts with the capabilities of some of the new solutions on the market, and today’s data protection admin can help drive automation into the business and help other parts of the organization, such as development, move faster with things like data reuse templates, while removing the need for workarounds that plague IT, such as shadow IT. Business today is no longer about big vs small but fast vs slow. New data protection solutions can enable businesses to move much faster and take advantage of the new corporate currency: data!